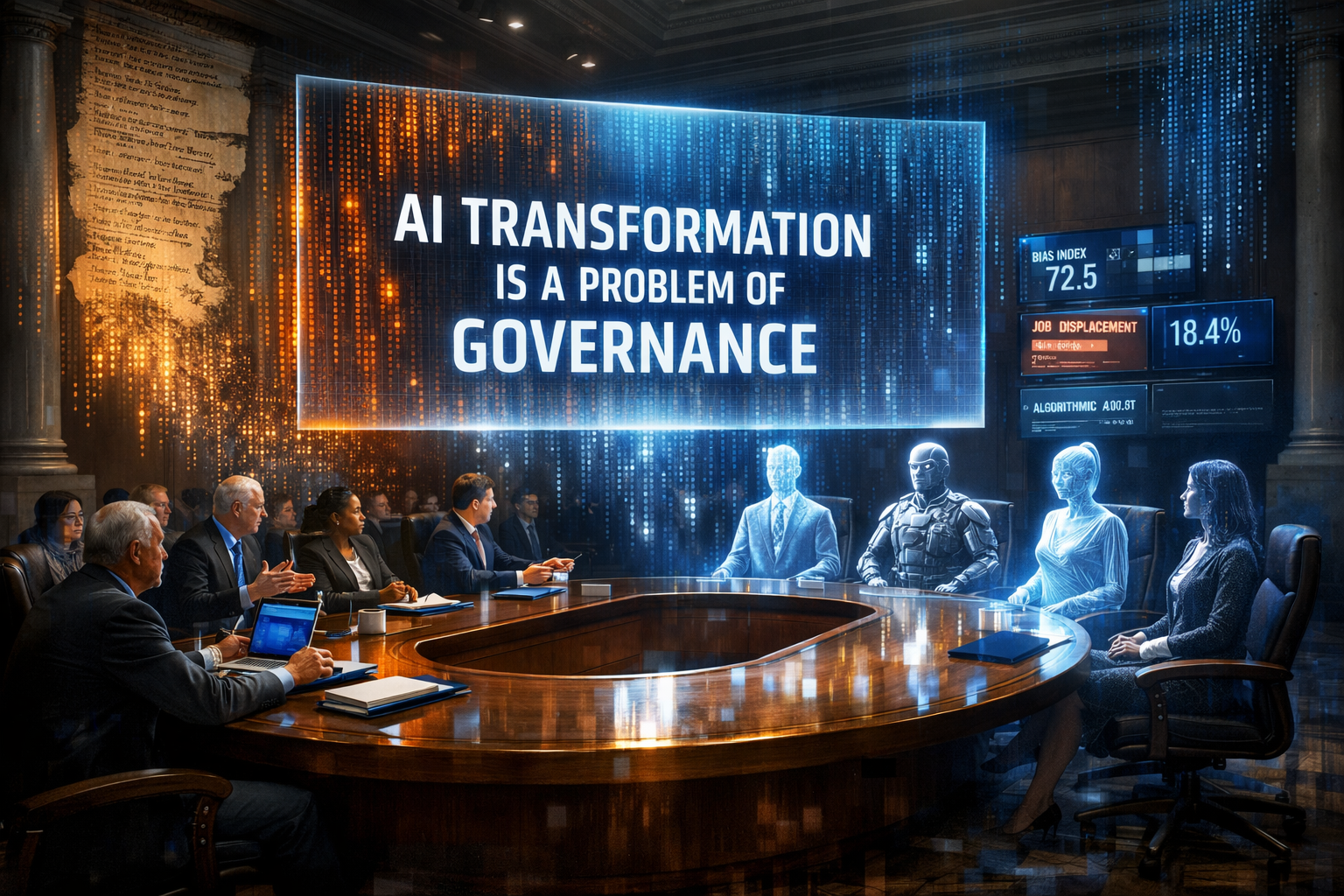

AI transformation is a problem of governance

AI transformation is a problem of governance. As artificial intelligence reshapes economies, societies, and daily life at an unprecedented pace, the fundamental challenge is not technological invention but building governance frameworks that channel it responsibly. Without robust oversight, AI transformation risks entrenching inequality, undermining privacy, and destabilizing global systems. In this article, we briefly discuss why AI transformation requires immediate governance action, the costs of inaction, and how to build an effective policymaking framework.

Understanding the magnitude of AI transformation

AI transformation involves embedding machine learning, generative models, and autonomous systems into everything from healthcare diagnosis to financial trading, and from manufacturing automation to creative industries. What started as limited use cases in the early 2010s has evolved into general-purpose AI capable of outperforming humans at complex tasks. According to projections from leading economic forums, AI will add billions to global GDP by 2026. While it is spurring efficiency gains, it is also upending the labor market, with entire categories of work on the verge of extinction.

The problem is that this transformation is not neutral. It centralizes power in the hands of a handful of tech oligarchs and nation-states with superior computing capacity. Unlike earlier industrial revolutions, which involved mechanical inventions that changed how people worked or lived, AI’s self-improving capabilities include feedback loops that accelerate change exponentially. Governance lags because conventional regulation is designed for slow-moving industries such as automobiles and pharmaceuticals, and cannot keep up with algorithms that are updated daily. This mismatch is what makes AI transformation a governance crisis: how can societies set rules when the rules can barely keep up with the technology they are meant to govern?

Key Governance Challenges with AI Deployment

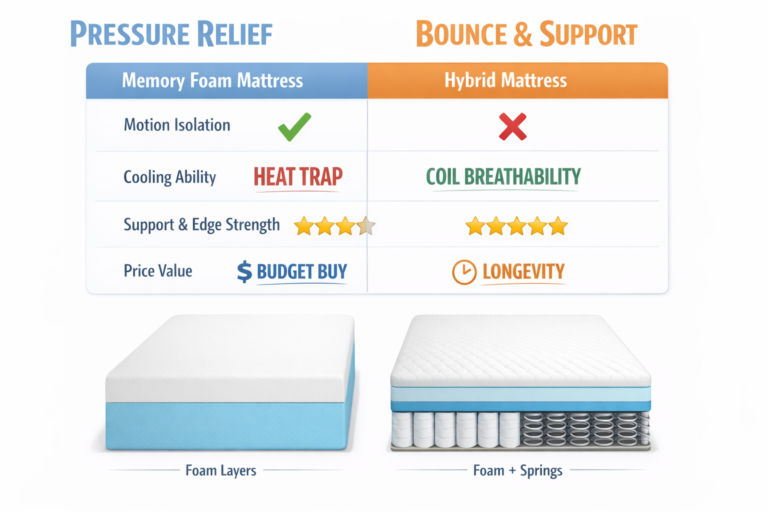

The first challenge is the ethical vacuum. AI systems trained on biased datasets drive discrimination in hiring, lending, and law enforcement. For example, facial recognition software has been found to be less accurate for some racial groups, and has been linked to several known wrongful arrests in multiple countries. In the absence of mandatory audits and transparency requirements, companies tend to prioritize profit over equity, leaving governments to address the consequences reactively through lawsuits rather than proactively through standards.

Privacy erosion is another concern. AI relies on vast datasets, often gathered without clear consent. Surveillance capitalism leverages personal data to power predictive algorithms, threatening civil liberties. This raises a question: who owns a person’s digital footprint, the citizen or the corporation? Authoritarian states use AI for mass surveillance and social scoring, and they export these models, fueling a governance race to the bottom.

Job displacement adds socioeconomic strain. While AI will generate new jobs in data science and ethics oversight, it will also displace millions from routine cognitive and manual labor. Retraining programs exist, but they are often patchy and underfunded. Governments need to respond, for example through universal basic income pilots or taxes on AI-driven productivity gains, but political gridlock often prevents timely action. The result? Growing inequality that breeds populism and social unrest.

Geopolitical tensions make things worse. AI is now a national security issue, with major powers investing billions and restricting exports. This rivalry hinders the development of global standards. For example, the EU’s AI Act, which emphasizes rights, differs sharply from more permissive approaches elsewhere. Without global agreements, AI could escalate hybrid warfare and trigger an autonomous weapons race.

Why Current Governance Falls Short

Traditional governance models operate on the presumption of linear progression and well-defined accountability. AI defies both. Many systems are black boxes: even their creators cannot explain the decisions that neural networks make. Liability questions remain unresolved: if an autonomous vehicle crashes or a medical AI makes an incorrect diagnosis, who is responsible, the programmer, the company that created it, or the user? Meanwhile, the fragmented patchwork of national laws gives rise to regulatory arbitrage, as firms can move to more permissive jurisdictions.

In addition, the multistakeholder nature of AI, with private firms, academia, civil society, and governments all involved, complicates decision-making. Tech lobbyists shape policy, and public trust erodes as scandals such as data breaches and manipulated algorithms shake elections. In developing economies, the digital divide makes matters worse: countries that lack infrastructure or expertise serve as laboratories for untested AI, with little recourse when things go wrong.

These gaps expose AI transformation as, at heart, a governance problem. It calls for not only technical knowledge but also the political will to weigh the benefits of innovation against the need for protection. In its absence, short-term successes obscure long-term systemic threats, including the existential fear that superintelligent AI might slip beyond human control, once a fringe debate and now taken seriously by prominent researchers.

Pathways Toward Effective AI Governance

Good governance begins with clear principles: transparency, accountability, and inclusivity. Governments could require impact assessments for high-risk AI systems, similar to environmental reviews for big infrastructure projects. Third-party audits and explainable-AI requirements would help ensure that humans never lose oversight in fields such as justice or defense.

International cooperation is essential. A global AI governance body, under the auspices of an organization such as the United Nations, might be empowered to set baseline standards for data ethics and safety testing. Models do exist, such as the OECD AI Principles and the G7 Hiroshima Process, but they need enforcement to be effective. Common regulations would lighten the compliance load for multinational companies and avert a regulatory patchwork.

At the national level, specialized AI ministries or regulatory agencies, staffed by technologists, ethicists, and economists, can fill these expertise gaps. Initiatives for AI literacy and reskilling, built on partnerships that address workforce challenges during disruptive times, can help workers and organizations adapt to change. Tax incentives that reward ethical AI development, combined with penalties for noncompliance, would tie the corporate bottom line to the common good.

Civil society also plays a critical role. Grassroots movements and independent watchdogs can exert pressure for citizen-centric policies, such as digital rights charters, that empower citizens to contest decisions made by automated systems. Academia must bring AI ethics into its curricula to prepare a generation capable of governing, developing, and applying this technology rather than merely consuming it.

The Future: Where Innovation Meets Responsibility

AI transformation promises a great deal: curing diseases, slowing climate change, and unlocking scientific breakthroughs. But this potential depends on governance that anticipates risks rather than simply reacting to them. History tells the story of ungoverned technologies, from nuclear energy to social media, that generate unwanted but predictable consequences when governance fails to keep pace with innovation.

Policymakers must act decisively. AI transformation is a governance imperative: the task is to harness its benefits without undermining democracy, equality, and human values. The alternative, fragmented and reactive policymaking, is a recipe for chaos, with the powerful few designing the future of these technologies on behalf of the many.

To sum up, AI transformation is a governance problem because the technologies will not regulate their own societal impact. It requires visionary leadership, adaptive institutions, and global collaboration. As AI continues to accelerate, the question is not how to build a smarter machine but whether we can govern one. The next decade will test our collective resolve. Failure is not an option; the stakes are nothing less than the fate of human civilization itself.
